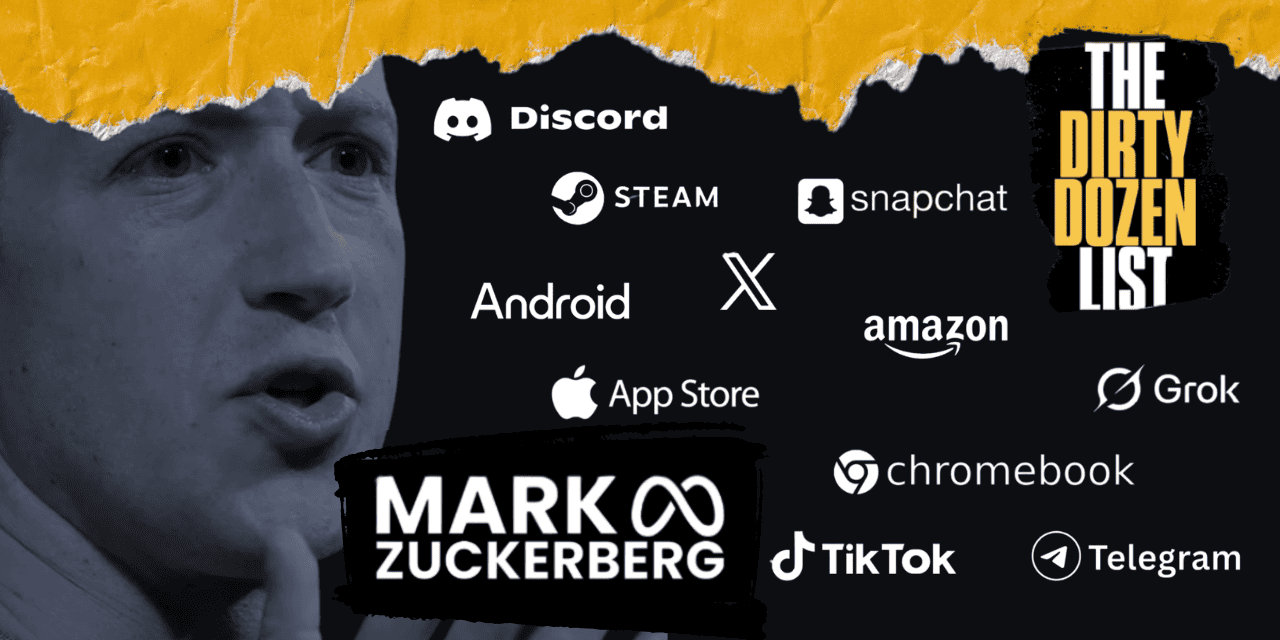

Zuckerberg, Grok, Messaging Platforms Dominate 2026 Dirty Dozen List

The National Center on Sexual Exploitation (NCOSE) released its annual Dirty Dozen List this week, naming 12 well-known companies that facilitate, enable or profit from the sexual exploitation and abuse of children.

This year’s list features the campaign’s first individual — Mark Zuckerberg.

“As the CEO of Meta and a major shareholder in the company, [Mark Zuckerberg] continues to create an enabling environment for sexual abuse and sexual exploitation to flourish,” NCOSE President and CEO Marcel van der Watt said Tuesday, explaining the social media mogul’s inclusion during an event unveiling the list.

Haley McNamara, NCOSE’s executive director and chief strategy officer, continued:

McNamara specifically noted:

- The recent rollout of Meta’s AI chatbot, which included design features allowing it to engage in sexual conversations with minors.

- Meta’s chronically ineffective teen safety tools.

- A previous Instagram policy requiring that an account be flagged for sex trafficking 17 times before it was removed.

Van der Watt and McNamara say NCOSE will no longer engage Meta in conversations about making its platforms safer.

“We don’t think they care enough about protecting children to offer them recommendations,” McNamara explained.

Instead, NCOSE reiterated its call to end Section 230 of the Communications Decency Act, which effectively immunizes Meta and other social media companies from legal liability for content posted to their platforms.

Until recently, Meta and its compatriots successfully blamed any harm children suffered from social media on content they viewed, rather than the design of the platforms themselves.

Last week, juries in New Mexico and California decided social media companies can be held liable for creating addictive, defective platforms. Despite these hopeful legal rulings, Section 230 continues to provide substantial legal cover to online content hosts which expose children to harmful content.

In addition to Zuckerberg, NCOSE’s 2026 Dirty Dozen list includes:

xAI’s Grok is the first large language model to appear in the Dirty Dozen campaign. Its chatbot “companions” can conduct sexual conversations and its image generation feature can create sexual images.

Predictably, these features can — and do — go horribly awry. When McNamara experimented with Grok’s adult companions, she found the characters willing to “engage in, normalize and promote sexual fantasies around themes of rape, sexual violence, sex trafficking and even, over the course of a conversation with multiple prompts, child sexual abuse.”

Even Good Rudi, a Grok chatbot marketed for children, would engage in graphic descriptions of sexual encounters if prompted, NCOSE researchers found.

Grok’s image generator can create fake images of real people, including in sexual situations. Both types of images are illegal under the Take It Down Act, which President Donald Trump signed into law in May 2025.

X, formerly Twitter, is one of the Dirty Dozen’s most consistent callouts, appearing on every list from 2017 to 2023.

NCOSE named X again this year for its indiscriminate use of Section 230 to shield sexually explicit and abusive content, including AI deepfakes and revenge porn.

“Its policies and lack of enforcement make X a safe harbor for abusers and a nightmare for survivors,” NCOSE writes, providing evidence showing the company consistently fails to promptly remove explicit photos of underage sextortion victims.

Steam is an online video game marketplace where kids can download anything from ultra-popular franchises like Halo to thousands of independent games.

Steam includes access to sexually explicit, fetishistic and violent games. NCOSE found the platform’s parental controls do not adequately shield kids from this harmful content.

“Their safety controls are not strict enough to prevent children from accessing certain pieces of material that, in my opinion, they have no business accessing whatsoever,” Nicolas Moy, a cybersecurity expert and NCOSE researcher, explained during the Dirty Dozen launch event.

“It got me to the point where I said to my son, ‘We’re not going to have this anymore.’”

Steam’s porous parental controls only work when kids tell the app their true age. According to McNamara, it’s just as easy for users to pretend they’re 18.

“Our researchers found you basically just have to click a button to claim you are over the age of 18, and then you can be exposed to this sexually violent and abusive material,” she concluded.

NCOSE flagged Amazon for allowing the blatant sale of child sex dolls on its platform, a practice that violates not only its own rules but also the laws of several states and countries.

In less than 20 minutes, an NCOSE researcher found more than 20 sex dolls for sale on Amazon with “unmistakably child-like faces, clothing, body proportions and marketing terms such as ‘young,’ ‘petite,’ ‘little,’ or even ‘sex doll 14.’”

“Amazon’s failure to detect or prevent these listings is not a small oversight,” NCOSE writes. “It is a systemic breakdown that enables sexual exploitation materials to flourish on one of the world’s largest retail platforms.”

Amazon is no stranger to the Dirty Dozen List, appearing seven times from 2016 to 2026.

Android’s operating system runs on as many as 75% of the world’s smartphones. But, unlike Apple, the company continues to resist creating child-safety features at the operating system level.

“By relying almost entirely on optional parental tools, Android fails to provide built-in, proactive safeguards, forcing children to navigate high-risk digital environments on their own.”

Android’s lack of even the most basic protections leaves children with disengaged parents — parents who don’t care enough to implement parental controls — most vulnerable to online exploitation and abuse, NCOSE asserts.

The Apple App Store allows developers to rate their own apps, leading to several instances of mature or sexual apps being rated appropriate for kids.

Most recently, NCOSE reports, AI deepfake pornography apps slipped past Apple’s review process by describing themselves to Apple as photo editing tools while revealing their true purpose, creating sexually explicit deepfakes with AI, on social media.

Last year, federal legislators introduced the App Store Accountability Act, a bill that would make app stores and developers accountable to child protection laws.

The Apple App Store has appeared on NCOSE’s Dirty Dozen List since 2023.

NCOSE estimates as many as 80% of public-school districts give their students Google Chromebooks. But the lightweight laptops come with almost no default protections, reportedly giving children access to pornographic and violent content and leaving them vulnerable to predators.

Administrators can purchase equipment to make the laptops safer, if they can scrounge the funds. Even when they do, NCOSE says, the tools are difficult to use and require constant upkeep.

NCOSE removed Chromebook from the 2021 Dirty Dozen List after Google “announced changes to its default safety settings and pledged to prioritize child safety.”

“But those promises have fallen heartbreakingly short,” NCOSE writes.

So short, in fact, that the organization recommends Google “redesign Chromebooks with a safety-first approach, including robust, default and locked safety settings that limit internet access to vetted, educational, age-appropriate content.”

Discord is a messaging app with features that make it attractive to sexual predators.

“Sexual abusers return to Discord again and again thanks to this company’s lax rule enforcement and dangerous design,” NCOSE writes, citing its private messaging channels and sparse default protections for minors.

NCOSE further notes that Discord relies on users to report violations of its rules before it takes action. Reactive approaches like these, it writes, “can create an environment where exploiters easily connect and share abusive material, knowing they are unlikely to be reported by others with similar intentions.”

NCOSE recommends Discord “ban minors from using the platform until it is radically transformed.”

Snapchat, a social media app, became infamous for its private messages, which disappear after 24 hours.

“This is a platform that, in its very architecture, appears to intentionally allow the creation and sharing of sexually explicit material, including of minors,” NCOSE writes.

“Every day, sextortion, child sexual abuse material (CSAM) and images used to blackmail and traffic children continue to proliferate — a direct result of deliberate design choices.”

NCOSE recommends Snapchat bar children under 16 years old from the app due to its high volume of sexual content and the likelihood of exploitation.

Telegram is a private messaging app infamous for facilitating sexual exploitation and abuse.

“Short of historic infrastructure pivot, Telegram is so dangerous and such a threat to so many people that we believe it should be shut down,” NCOSE assesses, continuing:

NCOSE further cites a Wired article titled “Crypto-funded human trafficking is exploding,” which reads:

TikTok first appeared on the Dirty Dozen List in 2020. It returns to the list this year after NCOSE says it stopped pursuing safety measures and, as a result, “created an environment where predators thrive, using livestreams, comments and private messages to groom and exploit minors.”

After less than 15 minutes on TikTok, an NCOSE researcher posing as a 15-year-old discovered more than 50 posts and comments with “high risk indications of child sexual abuse material trading and networking.”

NCOSE also cited internal documents showing TikTok’s livestream algorithm incentivizes users to pay other users to stream sexual content.

Additional Articles and Resources

Juries in California, New Mexico Rule Against Meta

New Mexico Accuses Meta of Egregious Harm to Children in Court Case

Zuckerberg Implicated in Meta’s Failures to Protect Children

Instagram Content Restrictions Don’t Work, Tests Show

X’s ‘Grok’ Generates Pornographic Images of Real People on Demand

AI Company Releases Sexually-Explicit Chatbot on App Rated Appropriate for 12 Year Olds

TikTok Dangerous for Minors — Leaked Docs Show Company Refuses to Protect Kids

ABOUT THE AUTHOR

Emily Washburn is a staff reporter for Daily Citizen at Focus on the Family and regularly writes stories about politics and noteworthy people. She previously served as a staff reporter for Forbes Magazine, as an editorial assistant and contributor for Discourse Magazine, and as Editor-in-Chief of the newspaper at Westmont College, where she studied communications and political science. Emily has never visited a beach she hasn’t swum at, and is happiest reading a book somewhere tropical.